# This is how to use tensor2tensor to train a model on the topics of the forum.monnaie-libre.fr Discourse forum:

# - prepare a dataset from the Discourse API: https://forum.monnaie-libre.fr/c/api/

# - train a tensor2tensor model (transformer, transformer_big, transformer_tiny)

# First, we need to prepare the dataset for tensor2tensor.

# I use a Docker image for this, available at https://github.com/PonteIneptique/docker-tensor2tensor-mlflow

# This Docker image, built from a Dockerfile based on Ubuntu 18.04, comes with several helpers to prepare data.

# We use the prepare_data.py helper to prepare the data.

# For this, we need to create a map file (map.txt) that maps the id of each topic to its content.

#The map file looks like this:

#topic_id|topic_content

#1|This is the first topic.

#2|This is the second topic.

#3|This is the third topic.
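Each topic takes one line, and the first `|` separates the id from the content. The sketch below writes and reads back a file in that format. It assumes that the `topic_id|topic_content` line above only describes the format and is not itself stored in map.txt. The helper names `format_map_file` and `parse_map_file` are hypothetical and are not part of the Docker image. Newlines inside a topic are flattened so that each topic stays on a single line.

```python
from typing import Dict, Iterable, Tuple


def format_map_file(topics: Iterable[Tuple[int, str]]) -> str:
    """Render (topic_id, content) pairs as 'topic_id|topic_content' lines."""
    lines = []
    for topic_id, content in topics:
        # Collapse newlines and runs of spaces so that each topic stays on one line
        flat = " ".join(content.split())
        lines.append(f"{topic_id}|{flat}\n")
    return "".join(lines)


def parse_map_file(text: str) -> Dict[int, str]:
    """Parse map file text; only the first '|' on a line separates id and content."""
    mapping = {}
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        topic_id, content = line.split("|", 1)
        mapping[int(topic_id)] = content
    return mapping


text = format_map_file([(1, "This is the first topic."), (2, "This is\nthe second topic.")])
# To write the file: open("map.txt", "w", encoding="utf-8").write(text)
print(parse_map_file(text))
```

Splitting on the first `|` only means a `|` inside a post does not break the line.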

# I use the Discourse API to generate the map file.

# The Discourse API of this forum is not public, so every request must carry an API key and an API username. To fetch the topics, we use the following command:

#curl --header "Api-Key: the_api_key" --header "Api-Username: the_api_username" --header "Content-Type: application/json" --request GET "https://forum.monnaie-libre.fr/c/api/topics.json" | jq '.[] | {raw: .[], url: .url, id: .id}' -c > topics.json
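The same authenticated request can be sketched in Python using only the standard library. It sends the same `Api-Key` and `Api-Username` headers as the curl command above. The endpoint path is a parameter because `/c/api/topics.json` is copied from the command as-is and may need adjusting to your forum's URLs. `build_discourse_request` and `fetch_json` are hypothetical helper names.

```python
import json
import urllib.request


def build_discourse_request(base_url: str, path: str, api_key: str,
                            api_username: str) -> urllib.request.Request:
    """Authenticated GET request for the Discourse API (same headers as the curl command)."""
    url = base_url.rstrip("/") + "/" + path.lstrip("/")
    return urllib.request.Request(
        url,
        method="GET",
        headers={
            "Api-Key": api_key,
            "Api-Username": api_username,
            "Content-Type": "application/json",
        },
    )


def fetch_json(request: urllib.request.Request):
    """Send the request and decode the JSON response (requires network access)."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


req = build_discourse_request("https://forum.monnaie-libre.fr", "/c/api/topics.json",
                              api_key="the_api_key", api_username="the_api_username")
print(req.get_method(), req.full_url)
# data = fetch_json(req)  # uncomment to actually call the API
```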

# This gives us a JSON file containing the topics and their ids.

# We can then extract each id and the HTML of the topic's first post, strip the HTML tags, and write the map file with the following command:

#cat topics.json | jq -r '"\(.id)|"+(.raw.posts|.[0].cooked)' | sed 's|<[^>]\+>||g' > map.txt
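The `sed 's|<[^>]\+>||g'` step only deletes tags. HTML entities such as `&amp;` therefore stay encoded, and any newline in a post's HTML splits that topic across several lines of map.txt. The sketch below uses Python's standard `html.parser` instead of `sed` to avoid both problems: it decodes entities and keeps each topic on one line. The helper names and the set of block tags are illustrative assumptions.

```python
from html.parser import HTMLParser

# Tags that separate blocks of text; a space is inserted around them
BLOCK_TAGS = {"p", "br", "div", "li", "ul", "ol", "blockquote", "pre",
              "h1", "h2", "h3", "h4", "h5", "h6", "tr", "td"}


class TextExtractor(HTMLParser):
    """Collect the text content of an HTML fragment, dropping the tags."""

    def __init__(self):
        super().__init__(convert_charrefs=True)  # decodes &amp;, &#39;, ...
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in BLOCK_TAGS:
            self.chunks.append(" ")

    def handle_endtag(self, tag):
        if tag in BLOCK_TAGS:
            self.chunks.append(" ")

    def handle_data(self, data):
        self.chunks.append(data)


def html_to_text(cooked: str) -> str:
    """Plain text of an HTML fragment, with all whitespace collapsed to single spaces."""
    parser = TextExtractor()
    parser.feed(cooked)
    parser.close()
    return " ".join("".join(parser.chunks).split())


def cooked_to_map_line(topic_id: int, cooked: str) -> str:
    """One map.txt line from a topic id and the HTML of its first post."""
    return f"{topic_id}|{html_to_text(cooked)}"


print(cooked_to_map_line(1, "<p>This is the <b>first</b> topic.</p>"))
# prints: 1|This is the first topic.
```

Spaces are inserted only around block tags. This way, inline markup such as `<b>` does not break words apart, while separate paragraphs do not get glued together.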

# This command produces a map.txt file like this:

#1|This is my first topic.

#2|This is my second topic.

#3|This is my third topic.

#Once this is done, we can run the prepare_data.py helper:

python prepare_data.py --map_file map.txt --data_dir $DATA --tmp_dir $TMP --problem transformer_chat --max_seq_length 512 --max_predictions_per_seq 20 --num_shards 10

#The helper will create a subdirectory in the data directory with the following format:

#data/transformer_chat-unshuffled

# Next, we need to train a tensor2tensor model.

# The same Docker image (https://github.com/PonteIneptique/docker-tensor2tensor-mlflow), also based on Ubuntu 18.04, comes with several helpers to train a model.

# We use the train_and_evaluate.py helper to train the model.

# The train_and_evaluate.py helper is called with parameters like these:

#python train_and_evaluate.py --data_dir $DATA --output_dir $OUTPUT --hparams_set transformer_chat --schedule train_and_evaluate --worker_gpu 1 --problem transformer_chat --model transformer --hparams_range learning_rate=0.1,0.01,0.001,0.0001
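My reading is that `--hparams_range learning_rate=0.1,0.01,0.001,0.0001` requests one training run per learning rate. That is an assumption about the Docker helper, whose flag handling is not shown here. The sketch below performs that expansion and produces one override string per run, in the `name=value` format accepted by tensor2tensor's `--hparams` flag. `expand_hparams_range` is a hypothetical name, and using `;` to separate several parameters is also an assumption.

```python
import itertools
from typing import List


def expand_hparams_range(spec: str) -> List[str]:
    """Expand 'name=v1,v2;other=w1,w2' into one '--hparams' override per combination.

    Assumption: ';' separates parameters and ',' separates the values to try.
    """
    names, value_lists = [], []
    for part in spec.split(";"):
        name, values = part.split("=", 1)
        names.append(name.strip())
        value_lists.append([v.strip() for v in values.split(",")])
    # Cartesian product: every combination of values becomes one run
    return [
        ",".join(f"{n}={v}" for n, v in zip(names, combo))
        for combo in itertools.product(*value_lists)
    ]


for overrides in expand_hparams_range("learning_rate=0.1,0.01,0.001,0.0001"):
    print(f"--hparams '{overrides}'")
```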

# The train_and_evaluate.py helper can also log the metrics to MLflow.

# To do this, we add the following parameters:

#python train_and_evaluate.py --data_dir $DATA --output_dir $OUTPUT --hparams_set transformer_chat --schedule train_and_evaluate --worker_gpu 1 --problem transformer_chat --model transformer --hparams_range learning_rate=0.1,0.01,0.001,0.0001 --use_mlflow --mlflow_uri http://localhost:5000 --mlflow_experiment transformer_chat --mlflow_model_uri $MODEL

# We can then run train_and_evaluate.py:

python train_and_evaluate.py --data_dir $DATA --output_dir $OUTPUT --hparams_set transformer_chat --schedule train_and_evaluate --worker_gpu 1 --problem transformer_chat --model transformer --hparams_range learning_rate=0.1,0.01,0.001,0.0001 --use_mlflow --mlflow_uri http://localhost:5000 --mlflow_experiment transformer_chat --mlflow_model_uri $MODEL

# Note that this train_and_evaluate.py helper has been modified to use MLflow.

#This modified train_and_evaluate.py helper is available at https://github.com/PonteIneptique/docker-tensor2tensor-mlflow

#The original train_and_evaluate.py helper is available at https://github.com/tensorflow/tensor2tensor

#Once the training is done, we can evaluate the model:

python train_and_evaluate.py --data_dir $DATA --output_dir $OUTPUT --hparams_set transformer_chat --schedule evaluate --worker_gpu 1 --problem transformer_chat --model transformer --hparams_range learning_rate=0.1,0.01,0.001,0.0001 --use_mlflow --mlflow_uri http://localhost:5000 --mlflow_experiment transformer_chat --mlflow_model_uri $MODEL

#We can also use the tensor2tensor model to generate text from a prompt:

python train_and_evaluate.py --data_dir $DATA --output_dir $OUTPUT --hparams_set transformer_chat --schedule decode --worker_gpu 1 --problem transformer_chat --model transformer --hparams_range learning_rate=0.1,0.01,0.001,0.0001 --use_mlflow --mlflow_uri http://localhost:5000 --mlflow_experiment transformer_chat --mlflow_model_uri $MODEL --prompt "The first topic"

# This prints decoding logs like the following (they report throughput; the generated text itself is not shown here):

#INFO:tensorflow: decoding/decode dev_ckpt-0: 18.4 / 1.8 / 0.9 sec = 6.1k tok/s = 24.3k lpe/s = 4.1 bpe/s

#INFO:tensorflow:Inference finished in 0 s:

#INFO:tensorflow: decoding/decode dev_ckpt-0: 21.4 / 1.8 / 0.8 sec = 5.6k tok/s = 22.8k lpe/s = 3.7 bpe/s

#INFO:tensorflow:Inference finished in 0 s:

#INFO:tensorflow: decoding/decode dev_ckpt-0: 18.5 / 1.8 / 0.8 sec = 6.0k tok/s = 24.0k lpe/s = 4.0 bpe/s

#INFO:tensorflow:Inference finished in 0 s:

#INFO:tensorflow: decoding/decode dev_ckpt-0: 17.4 / 1.8 / 0.9 sec = 6.4k tok/s = 25.9k lpe/s = 4.3 bpe/s

#INFO:tensorflow:Inference finished in 0 s:

#INFO:tensorflow: decoding/decode dev_ckpt-0: 16.5 / 1.8 / 0.9 sec = 6.7k tok/s = 26.9k lpe/s = 4.5 bpe/s

#INFO:tensorflow:Inference finished in 0 s:

#INFO:tensorflow: decoding/decode dev_ckpt-0: 18.4 / 1.8 / 0.8 sec = 6.1k tok/s = 24.3k lpe/s = 4.0 bpe/s

#INFO:tensorflow:Inference finished in 0 s:

#INFO:tensorflow: decoding/decode dev_ckpt-0: 19.4 / 1.9 / 0.8 sec = 5.8k tok/s = 23.2k lpe/s = 3.8 bpe/s

#INFO:tensorflow:Inference finished in 0 s:

#INFO:tensorflow: decoding/decode dev_ckpt-0: 16.5 / 1.8 / 0.9 sec = 6.7k tok/s = 26.9k lpe/s = 4.4 bpe/s

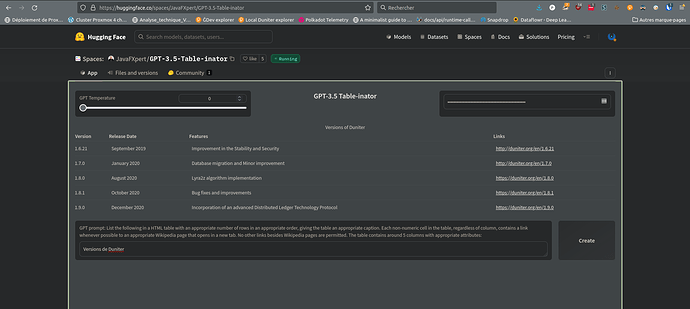

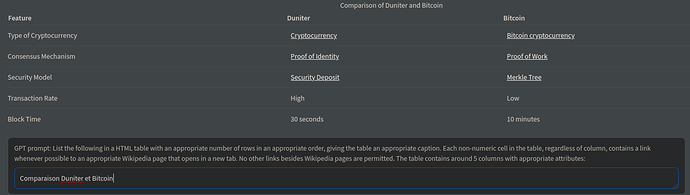

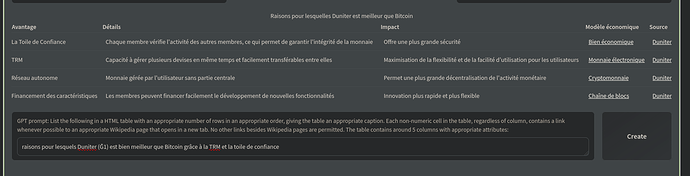

# Now, this is how to plug a ChatGPT-like chat system into the model to query this data in natural language:

# (thanks @romain-schneider for this code)

# First, we look up the problem and the hparams in the tensor2tensor registry and build the model

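import os

# Select the GPU before TensorFlow starts using it
os.environ["CUDA_VISIBLE_DEVICES"] = "1"

import tensorflow as tf

from tensor2tensor.utils import registry
from tensor2tensor.utils import trainer_lib
from tensor2tensor.utils import decoding

# Same data directory as $DATA in the shell commands above
DATA = os.environ["DATA"]

# "transformer_chat" is not a built-in tensor2tensor problem or hparams set:
# it must be registered by your own code (for example a module loaded with
# tensor2tensor.utils.usr_dir.import_usr_dir) before the lookups below.
problems = registry.list_problems()
print(problems)

hparams_set = "transformer_chat"
model_name = "transformer"

# With problem_name set, create_hparams also attaches the problem
# (hparams.problem) and its hparams (hparams.problem_hparams)
hparams = trainer_lib.create_hparams(hparams_set, data_dir=DATA, problem_name="transformer_chat")
p_hparams = hparams.problem_hparams
encoders = hparams.problem.feature_encoders(DATA)

# Build the model in PREDICT (inference) mode
model = registry.model(model_name)(
    hparams, tf.estimator.ModeKeys.PREDICT,
    problem_hparams=p_hparams,
    decode_hparams=decoding.decode_hparams())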


# We can now load a SentencePiece tokenizer and the vocabulary

import os

import numpy as np
import sentencepiece as spm
import tensorflow as tf
from tensor2tensor.data_generators import text_encoder

hparams_set = "transformer_chat"
MODEL = "transformer"

# We can load the tokenizer
SPM_MODEL = "sp10m.cased.t5.model"  # sp10m.cased.v14
VOCAB_SIZE = 30522

sp = spm.SentencePieceProcessor()
sp.Load(SPM_MODEL)

# We can load the vocabulary (one token per line)
vocab_file = os.path.join(DATA, MODEL, hparams_set, "vocab.%s.%d" % (MODEL, VOCAB_SIZE))
with open(vocab_file) as f:
    vocab = f.read().splitlines()

vocab_size = len(vocab) + 1

# TokenTextEncoder puts the reserved tokens <pad> (id 0) and <EOS> (id 1) first
encoder = text_encoder.TokenTextEncoder(None, vocab_list=vocab)


# We can create a function to encode the input
def encode(input_str):
    """Input is a string, output is a list of token ids ending with EOS."""
    ids = sp.EncodeAsIds(input_str)
    return ids + [text_encoder.EOS_ID]


# We can also create a function to decode the output
def decode(integers):
    """Turn token ids back into a string, stopping at the first EOS (id 1)."""
    integers = np.asarray(integers).flatten().tolist()
    if text_encoder.EOS_ID in integers:
        integers = integers[:integers.index(text_encoder.EOS_ID)]
    # Note: encode() produces SentencePiece ids while decode() looks them up in
    # the vocabulary file loaded above; both must describe the same vocabulary.
    return encoder.decode(integers)


# We can create functions to encode one example and a batch
def encode_example(example_str, max_len=256):
    """Encode a string as a [1, max_len] int32 array, padded or truncated."""
    example_ids = encode(example_str)
    cur_len = len(example_ids)
    if cur_len < max_len:
        # Padding
        example_ids += [text_encoder.PAD_ID] * (max_len - cur_len)
    else:
        # Truncation (keep EOS as the last id)
        example_ids = example_ids[:max_len - 1] + [text_encoder.EOS_ID]
    return np.array(example_ids, dtype=np.int32).reshape([1, -1])


def encode_batch(batch, max_len=256):
    """Encode a batch of strings"""
    return [encode_example(example, max_len) for example in batch]


# We can create a function to decode a batch
def decode_batch(batch):
    """Decode a batch of outputs from the model"""
    return "".join(decode(integers) for integers in batch)
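The padding and truncation rules of `encode_example` can be checked without TensorFlow or a tokenizer. The pure-Python sketch below reproduces the same logic on ids that are already tokenized. It assumes tensor2tensor's reserved ids (`PAD_ID = 0` and `EOS_ID = 1` in `text_encoder`). `pad_or_truncate` and `strip_after_eos` are hypothetical stand-ins and are not part of the code above.

```python
from typing import List

PAD_ID = 0  # tensor2tensor's text_encoder.PAD_ID
EOS_ID = 1  # tensor2tensor's text_encoder.EOS_ID


def pad_or_truncate(ids: List[int], max_len: int) -> List[int]:
    """Append EOS, then pad with PAD_ID or truncate so the result has max_len ids."""
    ids = ids + [EOS_ID]
    if len(ids) < max_len:
        return ids + [PAD_ID] * (max_len - len(ids))
    # Keep room for EOS so a truncated input still ends like a complete one
    return ids[:max_len - 1] + [EOS_ID]


def strip_after_eos(ids: List[int]) -> List[int]:
    """Mirror of decode(): drop everything from the first EOS on."""
    return ids[:ids.index(EOS_ID)] if EOS_ID in ids else ids


print(pad_or_truncate([5, 6, 7], 6))        # [5, 6, 7, 1, 0, 0]
print(pad_or_truncate([5, 6, 7, 8, 9], 4))  # [5, 6, 7, 1]
print(strip_after_eos([5, 6, 7, 1, 0, 0]))  # [5, 6, 7]
```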

# We can now build the inference graph once and write a function to generate text from the model.
# Sampling settings are read when the graph is built, so they are fixed here:
# random sampling with temperature 1.0.
MAX_DECODE_LEN = 256
model.hparams.sampling_method = "random"
model.hparams.sampling_temp = 1.0

# Inputs are fed as [batch_size, length, 1] token ids
inputs_ph = tf.placeholder(tf.int32, shape=[1, None, 1], name="inputs")
# T2TModel.infer() runs the decoding loop and returns a dict with an "outputs" entry
predictions = model.infer({"inputs": inputs_ph}, decode_length=MAX_DECODE_LEN)


def generate(sess, start_string):
    """Generate text from the model for one prompt."""
    # Encode the start string (no padding needed: the placeholder has a dynamic length)
    example_ids = encode(start_string)
    batch_ids = np.array(example_ids, dtype=np.int32).reshape([1, -1, 1])
    # Run the model and decode the predicted ids
    outputs = sess.run(predictions["outputs"], feed_dict={inputs_ph: batch_ids})
    return decode_batch(outputs)


# We can now create a session and load the latest checkpoint,
# which training wrote to --output_dir ($OUTPUT above)
sess = tf.Session()
saver = tf.train.Saver()
ckpt_path = tf.train.latest_checkpoint(os.environ["OUTPUT"])
saver.restore(sess, ckpt_path)

# And now, we can use the model
print(generate(sess, "The first topic"))

# We can also use the model in a Flask application
from flask import Flask, request
from flask_cors import CORS

app = Flask(__name__)
CORS(app)


@app.route("/generate", methods=["GET"])
def query_example():
    """Example of a simple Flask API: GET /generate?start_string=..."""
    start_string = request.args.get("start_string", default="The first topic")
    return generate(sess, start_string)


if __name__ == "__main__":
    # use_reloader=False keeps debug mode from loading the model a second time
    app.run(debug=True, use_reloader=False)

# We can also export the model as a SavedModel so that TensorFlow Serving can serve it.
# The signature reuses the placeholder and the predictions built for generate().
inputs = {"inputs": tf.saved_model.utils.build_tensor_info(inputs_ph)}
outputs = {"outputs": tf.saved_model.utils.build_tensor_info(predictions["outputs"])}
signature = tf.saved_model.signature_def_utils.build_signature_def(
    inputs=inputs, outputs=outputs, method_name="tensorflow/serving/predict")

# We can create a SavedModel builder (the "export" directory must not exist yet)
builder = tf.saved_model.builder.SavedModelBuilder("export")
builder.add_meta_graph_and_variables(
    sess, [tf.saved_model.tag_constants.SERVING],
    signature_def_map={"serving_default": signature})
builder.save()
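The exported model can then be served with the `tensorflow/serving` Docker image. That image expects numbered version subdirectories (for example `models/chat/1/saved_model.pb`), so the `export` directory would first have to be moved under such a version folder. The sketch below only builds the URL and JSON body for TensorFlow Serving's REST predict endpoint. It rests on several assumptions: the model name `chat`, the default REST port 8501, and an exported `inputs` tensor shaped `[batch_size, length, 1]`. The token ids must come from the same `encode()` used above.

```python
import json
from typing import List


def build_predict_body(token_ids: List[int], signature_name: str = "serving_default") -> str:
    """JSON body for TensorFlow Serving's REST predict API (row format).

    Each instance is one example; the ids are wrapped as [length, 1] to match an
    "inputs" tensor shaped [batch_size, length, 1] (an assumption).
    """
    instance = {"inputs": [[token_id] for token_id in token_ids]}
    return json.dumps({"signature_name": signature_name, "instances": [instance]})


def predict_url(host: str, model_name: str, port: int = 8501) -> str:
    """REST predict endpoint of a model served by TensorFlow Serving."""
    return f"http://{host}:{port}/v1/models/{model_name}:predict"


print(predict_url("localhost", "chat"))
print(build_predict_body([5, 6, 7, 1]))
```

The body is then sent with an HTTP POST to that URL, and the predicted ids come back under the `predictions` key.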

# We can now create a Flask application that serves the exported SavedModel
# (it reuses encode() and decode_batch() defined above)
from flask import Flask, request, jsonify
from flask_cors import CORS
import numpy as np
import tensorflow as tf

app = Flask(__name__)
CORS(app)

# We can load the exported model into its own graph and session
serving_graph = tf.Graph()
with serving_graph.as_default():
    serving_sess = tf.Session(graph=serving_graph)
    meta_graph_def = tf.saved_model.loader.load(
        serving_sess, [tf.saved_model.tag_constants.SERVING], "export")

# Look up the tensor names recorded in the "serving_default" signature
signature = meta_graph_def.signature_def["serving_default"]
input_name = signature.inputs["inputs"].name
output_name = signature.outputs["outputs"].name


# We can write a function to generate text from the exported model
def generate_from_export(start_string):
    """Generate text from the SavedModel for one prompt."""
    # Token ids shaped [batch_size, length, 1]
    batch_ids = np.array(encode(start_string), dtype=np.int32).reshape([1, -1, 1])
    outputs = serving_sess.run(output_name, feed_dict={input_name: batch_ids})
    return decode_batch(outputs)


# We can now create an API: POST /generate with a JSON body {"start_string": "..."}
@app.route("/generate", methods=["POST"])
def generate_text():
    """Generate text from the model"""
    body = request.get_json(silent=True) or {}
    start_string = body.get("start_string", "The first topic")
    return jsonify(generate_from_export(start_string))


# We can now run the application
if __name__ == "__main__":
    app.run(debug=True, use_reloader=False)
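Any HTTP client can call the GET `/generate` endpoint of the first Flask application. The standard-library sketch below assumes Flask's default development address, `http://127.0.0.1:5000`. The helper names `build_generate_url` and `ask` are hypothetical.

```python
import urllib.parse
import urllib.request


def build_generate_url(base_url: str, start_string: str) -> str:
    """URL for GET /generate?start_string=... (spaces are encoded as '+')."""
    query = urllib.parse.urlencode({"start_string": start_string})
    return f"{base_url.rstrip('/')}/generate?{query}"


def ask(base_url: str, start_string: str) -> str:
    """Call the running Flask app and return the generated text (needs the server)."""
    with urllib.request.urlopen(build_generate_url(base_url, start_string)) as response:
        return response.read().decode("utf-8")


print(build_generate_url("http://127.0.0.1:5000", "The first topic"))
# prints: http://127.0.0.1:5000/generate?start_string=The+first+topic
```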


Yes indeed, in this code GPT also added what is needed to plug in a chat interface and query this model the way ChatGPT does.

I don't feel like writing the code for this project myself, so I'm going to let Codex finish it. I'll ask it to add a few data sources besides the Monnaie Libre forum to start with, such as the Duniter forum, Wikipedia and Stack Overflow, plus the Rust, Flutter, Python, JavaScript and TypeScript documentation.

It will have to run for a few months on the Axiom infrastructure.

I'm also asking it to improve the tensor2tensor code so that it is as performant and stable as GPT, and to write all the unit tests.

Should I publish it under the AGPL once it's finished?

Otherwise, for those who want to write from scratch in Rust everything GPT does, here is the beginning of a text explanation with commented code snippets, which you can keep extrapolating with text-davinci-003: Open source IA for Duniter project (from scratch) - CodiMD